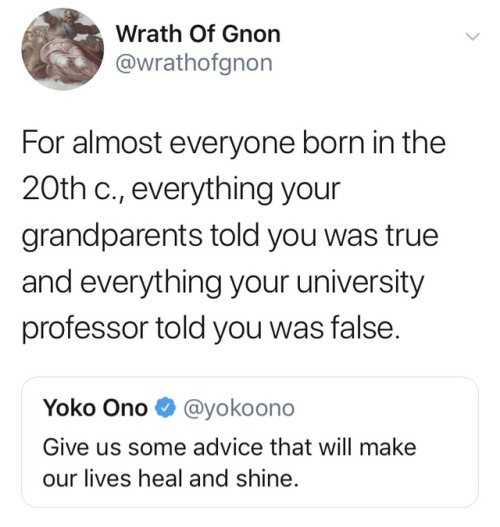

Agency isn't just the ability to choose among options or produce language on command. It's the felt experience of your own judgment, and it comes from working with a thought long enough for something honest to emerge. With AI, we may still feel informed and productive while the deeper habits, what I might dare to call "agency intelligence," begin to weaken.

There is also an economic layer to this that carries psychological weight. A commodity can be priced, tiered, throttled, advertised, and differentiated. Once intelligence enters that framework, the old questions of human thinking begin to mix with the logic of the market. And I think that's exactly where Sam Altman and OpenAI sit, at least based on his earlier quote.

The questions abound. Who gets the better model, the deeper reasoning, the larger context window, the more persistent memory? Who can afford a more capable form of synthetic cognition? That may sound like a technical issue, but it also changes how people imagine themselves in relation to thought. The self becomes less the source of intelligence and more the customer of cognitive support. To me, that's not a trivial shift. It encourages an insidious dependence that has no track record.

I suspect many people already feel this in everyday life. The moment before writing becomes prompting. The moment before reflection becomes querying. The unfinished thought no longer stays unfinished for long, because something is always available to complete it, and at a cost. That is useful, and often impressive, but it is also worth watching carefully. The danger of AI isn't "simply" that it replaces human thought. It may be that it gradually makes thought feel less like something we do and more like something we access and buy.

13 March 2026

Insidious.
